ASR

Speech recognition

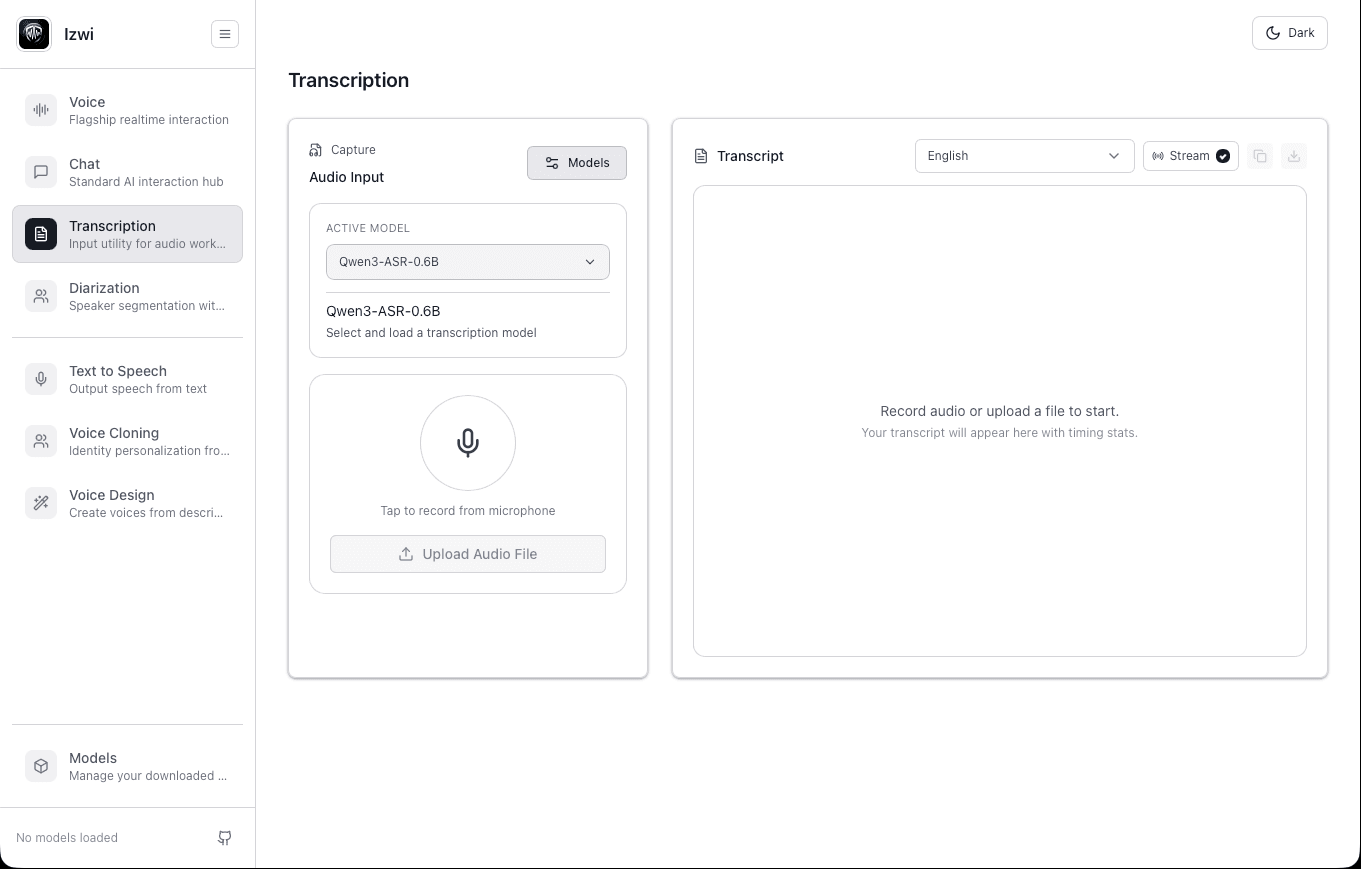

Transcribe audio locally with a clean API surface and a deployment path you can keep.

Voice AI runtime

Izwi gives teams one local-first runtime for speech recognition, text-to-speech, diarization, and voice workflows. Start on a laptop, integrate through OpenAI-compatible /v1 endpoints, and move into controlled deployment when the workflow becomes real.

Open source for evaluation. Commercial packaging for production deployment.

Most teams do not need six separate voice tools and six separate integration stories. They need one runtime that can handle the main speech workflows without sending audio to a third-party cloud.

Core runtime

Instead of stitching together separate vendors for transcription, synthesis, speaker separation, and voice orchestration, Izwi keeps the core stack close to the work and behind one deployment boundary.

Surface area

One API

One runtime shape for local evaluation and controlled deployment.

Data path

No relay

Audio stays inside your environment instead of bouncing through a hosted middle layer.

ASR

Transcribe audio locally with a clean API surface and a deployment path you can keep.

TTS

Generate speech inside your environment instead of routing every request through a hosted vendor.

DIAR

Separate speakers in meetings, interviews, and call recordings without moving the audio out.

STACK

Combine transcription, synthesis, and conversational layers in one local-first stack.

VOICE

Support custom voice workflows where policy and product fit allow it.

Use Izwi in layers. Explore on desktop, integrate through the runtime API, and move into supported deployment when the workflow matters.

Explore

01. Use the desktop app to test models, prototype workflows, and explore the product locally.

Integrate

02. Run the server, point your app at /v1, and integrate without rebuilding your whole stack.

Deploy

03. Move to supported builds, deployment guidance, version pinning, observability hooks, and commercial support when the workflow matters.
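One way to keep the Integrate and Deploy steps on the same code path is to read the runtime address from configuration, so the only thing that changes between a laptop and a controlled deployment is one value. The sketch below is illustrative only: `IZWI_BASE_URL` and `izwi_base_url` are hypothetical names chosen for this example, not Izwi settings, and the default port simply mirrors the local address used in the example on this page.

```python
import os

# Local default; the port mirrors the localhost example on this page.
DEFAULT_IZWI_URL = "http://localhost:8080"


def izwi_base_url(env=None):
    """Return the /v1 base URL an OpenAI-style client should target.

    IZWI_BASE_URL is a hypothetical variable name used for illustration;
    it is not a documented Izwi setting.
    """
    env = os.environ if env is None else env
    root = env.get("IZWI_BASE_URL", DEFAULT_IZWI_URL)
    # Tolerate a trailing slash so the result never contains "//v1".
    return root.rstrip("/") + "/v1"
```

With no override the helper returns the local address; setting the variable to an internal host moves the same application code onto a deployed runtime without touching call sites.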

Izwi is a strong fit when privacy, latency, cost predictability, or deployment boundaries make hosted voice APIs a bad answer.

Best fit

Embed a private voice layer into regulated or customer-controlled workflows. Izwi is strongest when voice is becoming infrastructure rather than a lightweight SaaS add-on. Teams in that position need control over where the runtime lives, how the integration behaves, and what support path exists when the workflow becomes operational.

Operational fit

Replace cloud speech dependencies with a runtime you can deploy and govern.

Edge fit

Run voice features where connectivity is weak, expensive, or not allowed.

Izwi keeps the migration story simple. If your team already works with OpenAI-style APIs, the path into a private runtime should feel familiar.

Example

Keep a familiar client, keep the same /v1 shape, and move the runtime closer to the work instead of pushing audio farther away.
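To make "the same /v1 shape" concrete for a second capability, here is a minimal sketch of a text-to-speech request built with only the standard library. It assumes the runtime serves the OpenAI-style `POST /v1/audio/speech` route with `model`, `input`, and `voice` fields; this page does not confirm that route for Izwi, and the model and voice names in the usage note are placeholders. The function only constructs the request and does not send it.

```python
import json
import urllib.request


def build_speech_request(base_url, text, model, voice):
    """Build (but do not send) an OpenAI-style text-to-speech request.

    Assumes the runtime exposes POST {base_url}/audio/speech, following the
    OpenAI API shape. Whether Izwi serves this exact route is an assumption.
    """
    payload = {"model": model, "input": text, "voice": voice}
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/audio/speech",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
```

Calling `build_speech_request("http://localhost:8080/v1", "Hello", "example-tts-model", "default")` yields a request aimed at the local runtime; passing it to `urllib.request.urlopen` would send it. Only the base URL differs from a hosted call, which is the point of keeping the /v1 shape.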

Example: point the OpenAI client at Izwi

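```python
from openai import OpenAI

# Point the standard OpenAI client at the local Izwi server.
client = OpenAI(
    base_url="http://localhost:8080/v1",
    api_key="not-needed",
)

# Open the audio in a context manager so the file handle is closed afterwards.
with open("audio.wav", "rb") as audio_file:
    transcript = client.audio.transcriptions.create(
        model="qwen3-asr-0.6b",
        file=audio_file,
    )
```

Keep the public story aligned to how Izwi actually sells.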

Commercial motion

Izwi should feel simple to try and specific to buy. The website needs to make that transition clear instead of treating every visitor as if they were at the same stage.

Phase 01

Download the open-source runtime and test the workflow on your own hardware first.

Phase 02

Scope one workflow in one target environment and decide what production really needs.

Phase 03

Move into Enterprise Runtime, Private Cloud, or OEM when the boundary conditions are real.

Run the product locally. Inspect how it works. Integrate it through a familiar API. Deploy it where the work already lives.

The open-source path is the fast way to evaluate and integrate.

Controlled production deployment belongs to the Enterprise Runtime story.

The runtime, desktop app, and commercial packaging are related, but not identical, product surfaces.

Guardrails

Not another cloud voice API with private deployment added later.

Not a vague AI platform that blurs every surface into one promise.

Not a collaboration-suite story when the real value is deployment control, support, and trust.

Start locally if you are exploring. Talk to us if you already know the boundary conditions.